We Keep Making AI Wear an Org Chart

I’m Brian Baldock, a Senior Software Engineer at Microsoft with over a decade of experience in cybersecurity, cloud technology, and Microsoft 365 deployments. My career has been shaped by a passion for solving complex technical challenges, driving digital transformation, and exploring the frontiers of AI and large language models (LLMs). Beyond my work at Microsoft, I spend my time experimenting in my home lab, writing about the latest in cybersecurity, and sharing blueprints to help others navigate the evolving digital landscape.

Why are we making this new amazing thing imitate the old thing?

I keep seeing the same demos with different clothes on.

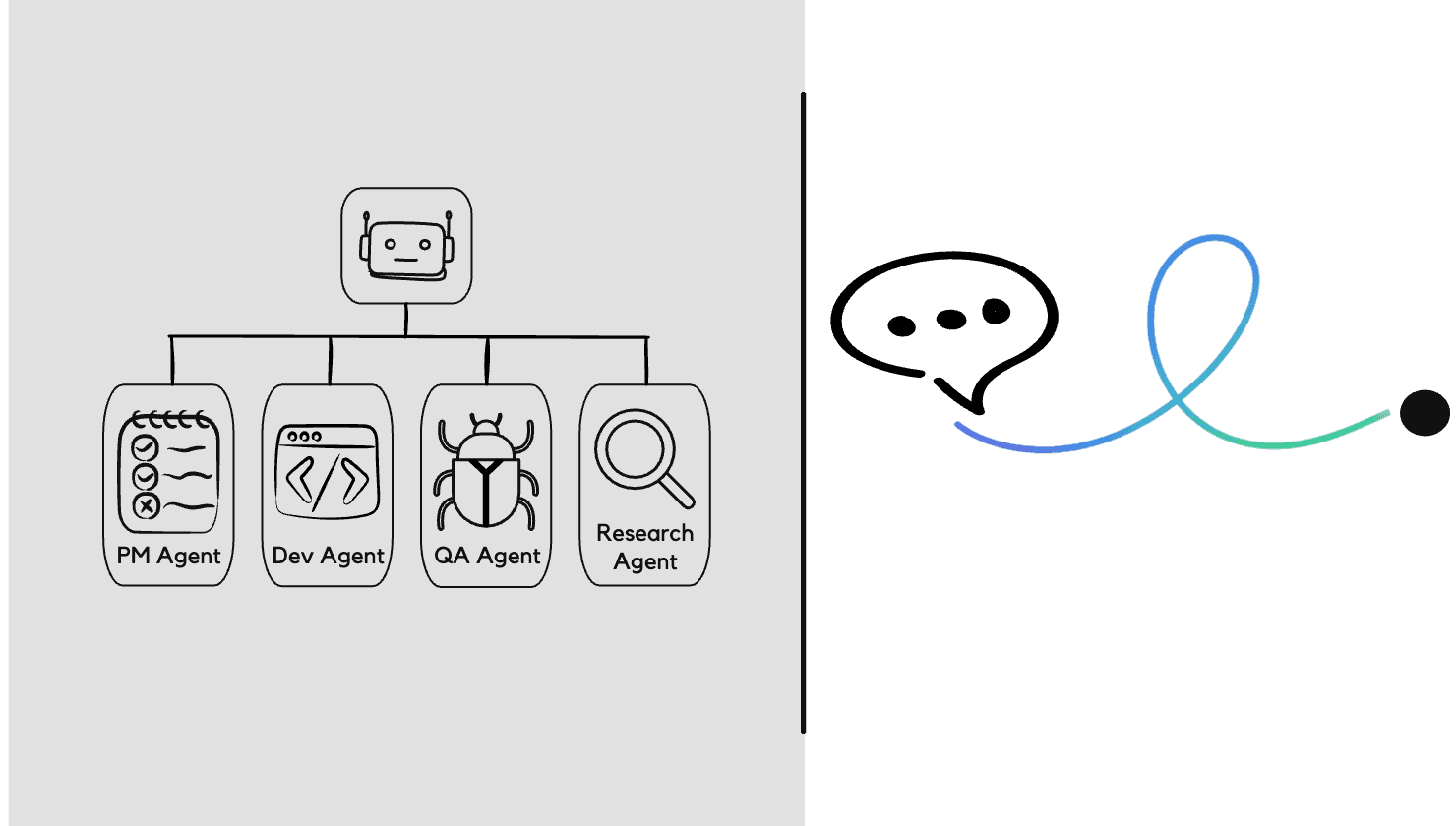

Program Manager Agent + Project Manager Agent + Developer Agent(s) + QA Agent + Research Agent, and so on... There's also a nice clean dashboard so we can watch and monitor all the fake people doing their tasks in the fake company we just created. We can see them creating user stories, epics, tickets, handoffs, status updates and approval flows, all with ~ wait for it ~ human in the loop *ding ding ding*. Basically all the same machinery that we already have, except with AI taped to the front of it.

And honestly, every time I see it, I have the same reaction: "Why are we making this new amazing thing imitate the old thing?"

Before you burn me at the stake, don't get me wrong, I'm not saying that it's useless. Some of it is super useful. Some of it will most likely save people a ton of time!

But I can't be the only person who feels like something is off. Like we took something genuinely strange, powerful, likely even category-breaking, and immediately forced it to fill out forms, attend standups and enumerate tickets.

That cannot be the destination.

We are still in the skeuomorphic phase

I think that is the part that has been bugging me for the last month or so.

We're still in this skeuomorphic phase of AI. We keep giving it the shape of the systems we already understand because we don't know what its native shape actually is.

So, we reach for the org chart. We reach for the workflow diagram, the dashboard, the interface. We make it legible by making it look like us.

This is completely understandable. It's what people always do with new technology at first. But it is also, maybe, the least interesting thing we could do with AI.

A lot of the current wave feels like we are trying to figure out a way to treat AI as cheap labor. So we assign it roles, we manage it and split it into functions and build little robot departments.

Maybe that works well enough for demos and maybe it even works well enough for some real workflows. But I don't think it gets us to the deeper opportunity.

Because a lot of these structures are not sacred truths, they're coping mechanisms.

Humans get tired and forget context. Humans specialize because no single person can hold the whole moving mess in their head forever. Org charts, handoffs, and process layers exist partly because human attention is limited.

That's the right answer for human systems. I'm saying it may not be the right answer for AI-native systems.

The scarce thing is not labor

This is where I think the conversation usually goes sideways. We keep acting like the scarce thing is labor: typing, coding, producing documents, summarizing articles, making more stuff. I do not think that is the real bottleneck.

I think the scarce thing is and will continue to be coherent attention across time.

Real work is not just "answer the question" ~ real work is about the full loop.

Current AI is pretty good in the middle of that loop: it can reason, write, summarize, draft, code, brainstorm, whatever.

The ends of that loop are the messy parts: getting the AI to stick with the same objective over time, or remembering what mattered three weeks ago. Noticing what actually changed in reality and not just in the prompt. Tracking whether the results actually worked, changing behavior based on the consequences, and knowing when to act and when to escalate.

That's still where things fall apart, and I think that's exactly why so much of today's AI landscape feels weirdly fake. Not because the models are weak, but because the surrounding architecture is still borrowing legitimacy from old software and old org charts.

So what is actually missing?

The real frontier is continuity, consequence, and persistence.

The missing thing is not another interface. It's continuity under consequence. That is the phrase that I keep coming back to. Not more agent avatars, not more swarm dashboards. Not more elaborate puppet shows where fake employees hand work to each other while a human watches the parade.

Continuity.

Consequence.

Persistence.

Something that can stay with the problem, hold context, goals, constraints, decisions and unresolved tension over time. Something that can see whether the thing it did actually helped or hurt. Something that can learn from reality instead of living entirely inside a prompt window.

That is a lot closer to the real frontier.

So instead of:

"Here is my PM agent and my dev agent and my QA agent."

You get instead:

"Help me reduce onboarding drop-off without increasing support burden. Stay with that objective, watch what changes. Cluster the feedback items, compare the results to release. Suggest safe experiments and escalate the one tradeoff that actually needs a human."

That is different. That is not AI pretending to be part of the org chart. That is AI attached to an outcome. And yes, that matters.

Because if I do not have time to read an article, I probably don't need the podcast version of the same article either. I don't think that's leverage. It's cool for sure, but it seems like format conversion.

The better system would say:

"Out of everything that happened this week, here are the two things that materially change your assumptions, here is why, here is what they affect, and here is what you can ignore."

Some things already do something similar, thinking summaries by M365 Copilot for example. We're getting closer to solving that overarching feeling of what's wrong, but there is still work to do.

Yeah, I still like chat

This part matters because I do not think building a bigger interface is the answer. I like chatting with AI and I like typing. I don't really want to talk out loud most of the time; for some reason, talking out loud to a chatbot still feels weird to me, honestly. I also do not want to become the full-time administrator of my own prompt library, skill repository or agent zoo. Don't get me wrong, I do write skills, then I forget what I wrote and just end up prompting anyway.

Maybe that sounds like a bad habit but I don't think it is. I think it's a design clue.

Most people don't want to think like software, and they don't want to structure everything perfectly before the machine can help them. So maybe the future is not humans learning to think more like systems. The future is systems learning how to effectively participate in human thought without constantly demanding structure or the next thing from the human.

Chat or the accessible equivalent in front. Structured state underneath. You talk naturally and the system quietly tracks your goals, constraints, prior decisions, open loops, what changed, what is worth remembering, what can be reused, what actions are safe and what actually deserves your attention!

That feels right to me, or at least a lot more right than another dashboard filled with more distractions.
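To make "structured state underneath" a little more concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `ObjectiveState` class, its field names and the `record` and `close_loop` methods are hypothetical, and the hard part (a model deciding which chat sentence is a constraint or a decision) is deliberately left out.

```python
from dataclasses import dataclass, field


@dataclass
class ObjectiveState:
    """Hypothetical structured state kept underneath a chat conversation."""
    goal: str
    constraints: list[str] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)
    open_loops: list[str] = field(default_factory=list)

    def record(self, kind: str, text: str) -> None:
        """File one item (already extracted from chat) under its bucket."""
        buckets = {
            "constraint": self.constraints,
            "decision": self.decisions,
            "open_loop": self.open_loops,
        }
        buckets[kind].append(text)

    def close_loop(self, text: str) -> None:
        """Mark an open loop as resolved by removing it from the list."""
        self.open_loops.remove(text)


# The human just talks; these calls stand in for what the system tracks quietly.
state = ObjectiveState(goal="Reduce onboarding drop-off")
state.record("constraint", "Do not increase support burden")
state.record("decision", "Ship the shorter signup form first")
state.record("open_loop", "Check drop-off numbers after the release")
```

The point of the sketch is only that the state is plain, inspectable data rather than something buried in a prompt history.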

So what does this thing look like?

Great, you made it this far through my meandering critiques of the current state and are curious what I actually think the future could look like. Well, for starters, I don't think it starts life as a new operating system in the traditional sense.

It probably starts as an application or a workspace maybe (yeah I know but let's face it, we need a layer somewhere to get started). This sits on top of your existing tools. But in practice, it starts behaving like an operating layer for intent.

A normal OS manages CPU, memory, files, permissions, and processes. This kind of layer would manage goals, context, memory, priorities, permissions, actions and escalations.

Not tabs first, not apps first, not documents first.

Objectives first.

You open it and it tells you:

What materially changed

What matters now

What it already handled

What still needs your judgement

Not noise, not theater, not forty-seven updates pretending or trying to be useful. Just the delta with consequence. A quiet system should be able to say:

There are only two things worth your attention this morning.

I handled the reversible parts.

This one remaining choice changes the risk profile, so you need to decide.

That is a very different product shape. Less assistant as entertainer, more assistant as steward.
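A rough way to picture "just the delta with consequence" is a filter that drops noise, quietly handles what is reversible, and escalates only what is not. The Python sketch below assumes a hypothetical `Change` record with two hand-set flags (`affects_objective` and `reversible`); in a real system, deciding those flags would be the hard part and is not shown here.

```python
from dataclasses import dataclass


@dataclass
class Change:
    """Hypothetical record of one thing that changed since the last check-in."""
    summary: str
    affects_objective: bool  # does it materially change an assumption?
    reversible: bool         # can the system safely undo its own response?


def morning_brief(changes):
    """Split changes into what was handled quietly and what needs a human.

    Changes that do not affect the objective are dropped entirely.
    """
    handled, needs_judgement = [], []
    for change in changes:
        if not change.affects_objective:
            continue  # noise: never surfaced
        if change.reversible:
            handled.append(change.summary)
        else:
            needs_judgement.append(change.summary)
    return handled, needs_judgement


changes = [
    Change("Weekly newsletter arrived", False, True),
    Change("Retried the failed nightly export", True, True),
    Change("Vendor price change alters the risk profile", True, False),
]
handled, needs_judgement = morning_brief(changes)
```

With the sample data above, the newsletter never shows up, the retry lands in `handled`, and only the vendor change is escalated.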

Should we even build this?

Honestly, this part matters the most in my opinion.

Just because we can build a more persistent, more capable, more action-taking layer does not automatically mean we should build it.

If this becomes another manipulation engine, another attention trap, another system optimized to keep us engaged instead of actually helping us think, then no, I do not want it.

The human has to stay the principal. Not the product.

That means a few things have to be true. Memory should be inspectable, editable and erasable. Actions should be bounded, logged and reversible. The system should be able to explain why it acted, what evidence it used, and where uncertainty still exists, but it should stay quiet by default. It should interrupt for consequence, not for engagement.

Otherwise we are not building a trustworthy thinking partner. We are just building a more ambient form of manipulation.
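As one possible reading of "bounded, logged and reversible", here is a small Python sketch of an action layer. The names (`ActionLayer`, `LoggedAction`, `act`, `undo_last`) and the allow-list approach are assumptions made for illustration, not a description of any existing product.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class LoggedAction:
    """Hypothetical audit entry: what was done, why, and how to undo it."""
    description: str
    evidence: str
    undo: Callable[[], None]
    undone: bool = False


class ActionLayer:
    def __init__(self, allowed: set[str]):
        self.allowed = allowed              # bounded: explicit allow-list
        self.log: list[LoggedAction] = []   # logged: entries are never deleted

    def act(self, kind, description, evidence, do, undo) -> bool:
        """Run an action only if its kind is allowed; otherwise refuse."""
        if kind not in self.allowed:
            return False  # out of bounds: the caller should escalate to a human
        do()
        self.log.append(LoggedAction(description, evidence, undo))
        return True

    def undo_last(self) -> None:
        """Reverse the most recent action that has not been undone yet."""
        for action in reversed(self.log):
            if not action.undone:
                action.undo()
                action.undone = True
                return


labels: set[str] = set()
layer = ActionLayer(allowed={"label_email"})
applied = layer.act(
    "label_email", "Tagged message as invoice", "Subject contained 'invoice'",
    do=lambda: labels.add("invoice"), undo=lambda: labels.discard("invoice"),
)
blocked = layer.act(
    "send_payment", "Pay the invoice", "Invoice is due",
    do=lambda: None, undo=lambda: None,
)
```

Here the email label is applied and recorded with its evidence, while the payment is refused because it is outside the allow-list. Calling `layer.undo_last()` removes the label but keeps the log entry, marked as undone.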

We can still get this right. The missing thing is not "build a whole AI OS." The opening is discovering the right behavior.

That could be AI memory that doesn't feel creepy, a delta engine that only surfaces meaningful change, a safe action layer with real auditability, or a domain steward attached to one outcome that people actually care about. This is still a wide open space.

AI still needs a native shape

I don't think the next frontier is another fake org chart made of bots or a prettier interface for the same old process either. I think the next frontier is persistent outcome loops that look something like the following:

A quieter layer

A memory layer

An intent layer

A consequence layer

Something that makes the conversation actually mean something across time. Right now a lot of AI feels like we took something genuinely new and immediately made it imitate the shape of old work because that's what's comfortable.

I think the biggest opportunity we have right now is figuring out what work actually looks like when memory, reasoning, action, and adaptation all live in the same system and the human is governing intent instead of translating intent into process all day long.

That is the thing I keep circling, the thing in the back of my mind that feels off about where we are currently, and that, I think, is the thing that is just barely out of view.

AI does not need more theater, it needs a native shape.

We haven't found it yet, but we're getting close and I think we are starting to notice and feel where it should be.